(To get an understanding of what Exploration Day is, take a look at our first post here.)

The Theme - “In the Land of the Blind the One IDE is King”

The challenge was described as: “Design, Build, or Conceptualize a mobile app (iOS, Android, TV, Mobile Web, Watch) to aid in the daily life of those afflicted by loss of vision (total blindness or severe vision impairment).” While MartianCraft has no one on the team with this level of vision impairment, we constantly encourage our clients to consider accessibility not only in design elements but in feature implementations as well.

The Projects

Blind Taps

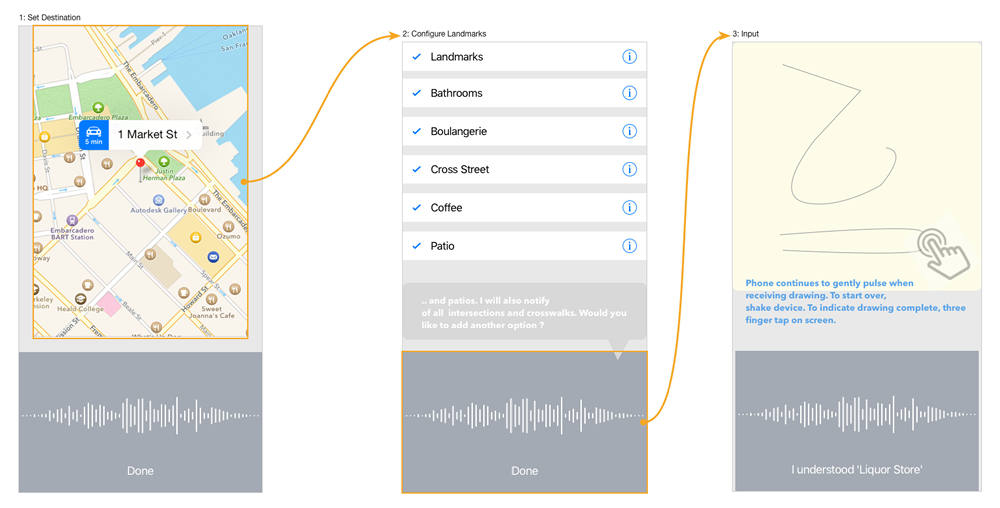

Made up of two engineers and one project manager, this team focused on how the sense of touch can be integrated into methods of communication, and how that might work in an app for users with visual impairments.

The team explored tactile feedback using the Taptic Engine in Apple Watch. The example application they developed is a turn-by-turn map app that accepts gesture input to mark a location along the route to a user’s destination.

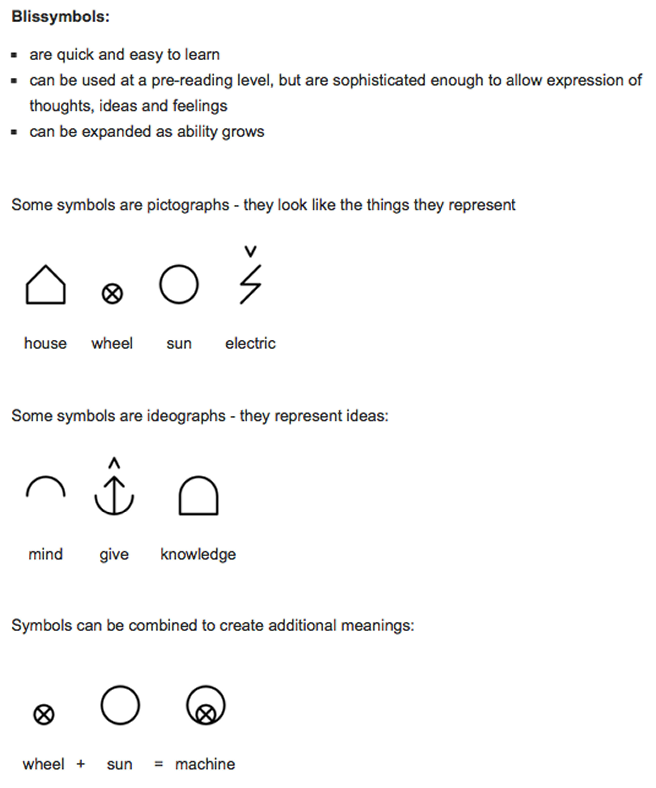

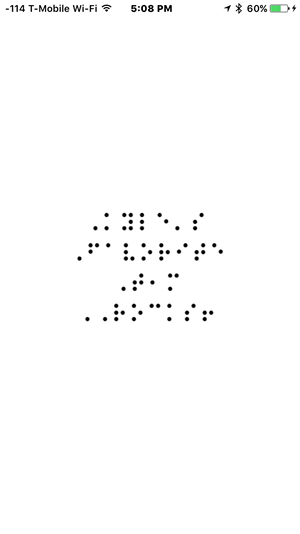

Gesture input is in the form of Blissymbols, which are widely used by persons with severe speech and hearing impairments. This is an example of Blissymbols from Blissymbolics.org.

The gesture, mapped to a contextual meaning, would alert the user by issuing a series of haptic responses at the moment the location is reached. The haptic response is unique to that landmark within the context of the app.
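To make the idea concrete, here is a minimal sketch in Python (chosen for brevity) of how a landmark could be paired with its own haptic pattern and triggered on arrival. Everything named here is an assumption for illustration: the `Landmark` type, the "short"/"long" pattern vocabulary, and the 15 meter arrival radius are hypothetical and are not taken from the Blind Taps prototype or from any watch API.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Landmark:
    name: str
    lat: float
    lon: float
    pattern: tuple  # hypothetical haptic pattern, e.g. ("short", "long")


def distance_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters, using the haversine formula."""
    earth_radius_m = 6_371_000
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * earth_radius_m * math.asin(math.sqrt(a))


def has_unique_patterns(landmarks):
    """True when no two landmarks share the same haptic pattern."""
    return len({lm.pattern for lm in landmarks}) == len(landmarks)


def patterns_to_play(landmarks, lat, lon, radius_m=15.0):
    """Patterns for every landmark within radius_m of the current position."""
    return [lm.pattern for lm in landmarks
            if distance_m(lm.lat, lm.lon, lat, lon) <= radius_m]
```

On a real device, the returned pattern would be handed to whatever haptic playback the platform offers; that part is deliberately left out of the sketch.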

Environmental Perception

This team, made up of a project manager and three engineers, wanted to take facial recognition a step further: not only recognize multiple people in a capture, but also determine whether the face or faces express some type of emotion. For example, are the people smiling, indicating happiness, or are they angry? If multiple people are found in the capture, perhaps there are also multiple emotions. The goal is to give someone without sight a sense of the mood in a room upon entering. The concept was to eventually move toward a live feedback mechanism that would not need a still image to be taken first.

The results wowed the crowd as they were presented and led to some comical demos.

The app this team created is called Sensimo. There’s not much to look at, but here’s an image that was input, and what the app read out:

Siri reading back the results from the API, “You are most likely looking at a group of people standing outside of a building. There are no happy people in the frame, but there are one people displaying other emotions.”
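Turning per-face results into a spoken sentence is mostly string assembly, though number agreement needs a little care (note the "one people" in the readout above). Here is a minimal Python sketch, assuming the recognition step yields one emotion label per detected face. The function names and the label strings are hypothetical; this is not Sensimo's code and not the response format of any particular API.

```python
def count_people(n, before="", after=""):
    """Phrase like 'there is 1 happy person' with singular/plural agreement."""
    noun = "person" if n == 1 else "people"
    verb = "is" if n == 1 else "are"
    quantity = "no" if n == 0 else str(n)
    words = [verb, quantity, before, noun, after]
    return "there " + " ".join(w for w in words if w)


def describe_mood(emotions):
    """Build a spoken summary from a list with one emotion label per face."""
    happy = emotions.count("happy")
    other = len(emotions) - happy
    first = count_people(happy, before="happy") + " in the frame"
    second = count_people(other, after="displaying other emotions")
    # Use "but" only when exactly one of the two groups is empty.
    joiner = "but" if (happy == 0) != (other == 0) else "and"
    sentence = f"{first}, {joiner} {second}."
    return sentence[0].upper() + sentence[1:]
```

The resulting string would then be passed to the platform's text-to-speech facility.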

Money Folder

Paper bills in the USA are all the same size, so many visually impaired people use a folding system to tell denominations apart, but that relies on trusting someone to tell you what each bill is to begin with. The goal of this app was to use the camera to snap a photo of a bill and have voice feedback tell you its value. This poses another problem: getting someone without sight to center the frame guides on the bill. The team solved this by having the user pull the camera back until it sees the entire bill, at which point the app automatically snaps a picture. From there the app processes the image and reads back the denomination.
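The "pull back until the whole bill is visible" step can be sketched as a simple bounds check. This Python sketch assumes some detector (not shown, and not specified in the project) reports the bill's bounding box in pixel coordinates for each camera frame. The `Box` type, the 2% margin, and the `"search"` / `"pull_back"` / `"snap"` action names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    left: float
    top: float
    right: float
    bottom: float


def bill_fully_visible(bill, frame_width, frame_height, margin=0.02):
    """True when the bill's box sits inside the frame with a small margin."""
    mx = frame_width * margin
    my = frame_height * margin
    return (bill.left >= mx and bill.top >= my
            and bill.right <= frame_width - mx
            and bill.bottom <= frame_height - my)


def next_action(bill, frame_width, frame_height):
    """Decide what to do for the current camera frame."""
    if bill is None:
        return "search"     # nothing detected yet; keep looking
    if bill_fully_visible(bill, frame_width, frame_height):
        return "snap"       # whole bill in view: take the picture
    return "pull_back"      # bill touches an edge: prompt the user to back up
```

Treating a box that touches the frame edge as "not yet visible" is the design choice that matters here: a clipped bill usually produces a box pinned to the border, so the margin acts as a cheap guard against snapping too early.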

Walkie Talkie

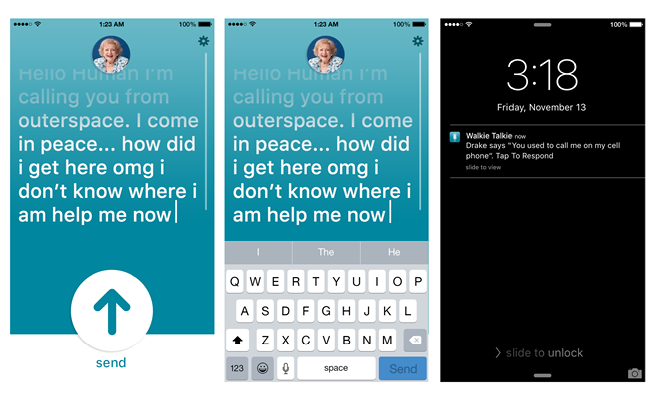

Another team, with one designer and two engineers, decided to target the ever-popular chat applications. Their objective was to create an app for casual, “walkie talkie”-like communication driven entirely by voice, with no need to look at the screen. For sighted users communicating through the app, the UI would be generous in its use of color and text size.

Speak to send, and read on receipt.

Lists-n-Notes

Another team, consisting of two developers, wanted to make a notepad of sorts: a place where you would scribe short and ongoing notes, of course without needing to see any of the app. Dictation would be started by tapping the screen, with methods for playing back the entire note or only the recently added portions, as well as a way to start a new note or append to a current one.

So… Lists-n-Notes, because it “list’ns” and makes lists and notes… get it?

When the app is launched, it prompts you with command help. The app tells you, “Say add note to add a note, list notes to list existing notes, …etc.” Tap the screen and the device enters “listening” mode, where it waits for a command. After you speak the command, tap again to finish listening, and the app carries it out.

Since there has to be some screen, the developers decided to be whimsical with it. It’s not “functional”, except to accept the tap to invoke the listening mode.
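The tap, speak, tap flow described above amounts to a two-state toggle with a command dispatcher behind it. Here is a minimal Python sketch under stated assumptions: only the two commands quoted in the help prompt are handled, speech recognition is simulated by a `hear` method, and treating the words after "add note" as the note's text is our guess at how dictation might be wired up. The class and its spoken replies are hypothetical, not the team's implementation.

```python
class ListsNNotes:
    def __init__(self):
        self.notes = []
        self.listening = False
        self._heard = []

    def hear(self, words):
        """Simulate recognized speech; ignored unless the app is listening."""
        if self.listening:
            self._heard.append(words)

    def tap(self):
        """First tap starts listening; the next tap stops and runs the command.

        Returns the text to speak back, or None when listening has just begun.
        """
        if not self.listening:
            self.listening = True
            self._heard = []
            return None
        self.listening = False
        return self._run(" ".join(self._heard).strip())

    def _run(self, spoken):
        lowered = spoken.lower()
        if lowered.startswith("add note"):
            text = spoken[len("add note"):].strip()
            if not text:
                return "What should the note say?"
            self.notes.append(text)
            return "Note added."
        if lowered == "list notes":
            if not self.notes:
                return "You have no notes."
            return " ".join(f"Note {i}: {note}."
                            for i, note in enumerate(self.notes, 1))
        return "Sorry, I did not understand."
```

Because every reply is a string meant to be spoken aloud, the screen never has to show anything, which matches the whimsical, non-functional display the developers settled on.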

The End of the Day

Each team came away from their four-hour sprint with a laundry list of things still needing to be done, but with an idea well formed in their heads. As a team we felt that all of the ideas were worthy of further development, albeit with some refinement. The hardest challenge was opening your mind to how you might craft an app to work in a manner to which you are completely unaccustomed: if I had no vision, what would be important to me in a technology solution? Creating even the most basic of apps proved difficult and interesting at the same time. We managed to leverage quite a few APIs from both Google and Microsoft to help build out a robust and meaningful toolset.